Question

Cisco

Posted: Jan 19, 2026

Last activity: Feb 2, 2026

How to use our own LLM/AI Model instead of Pega OOTB AI models?

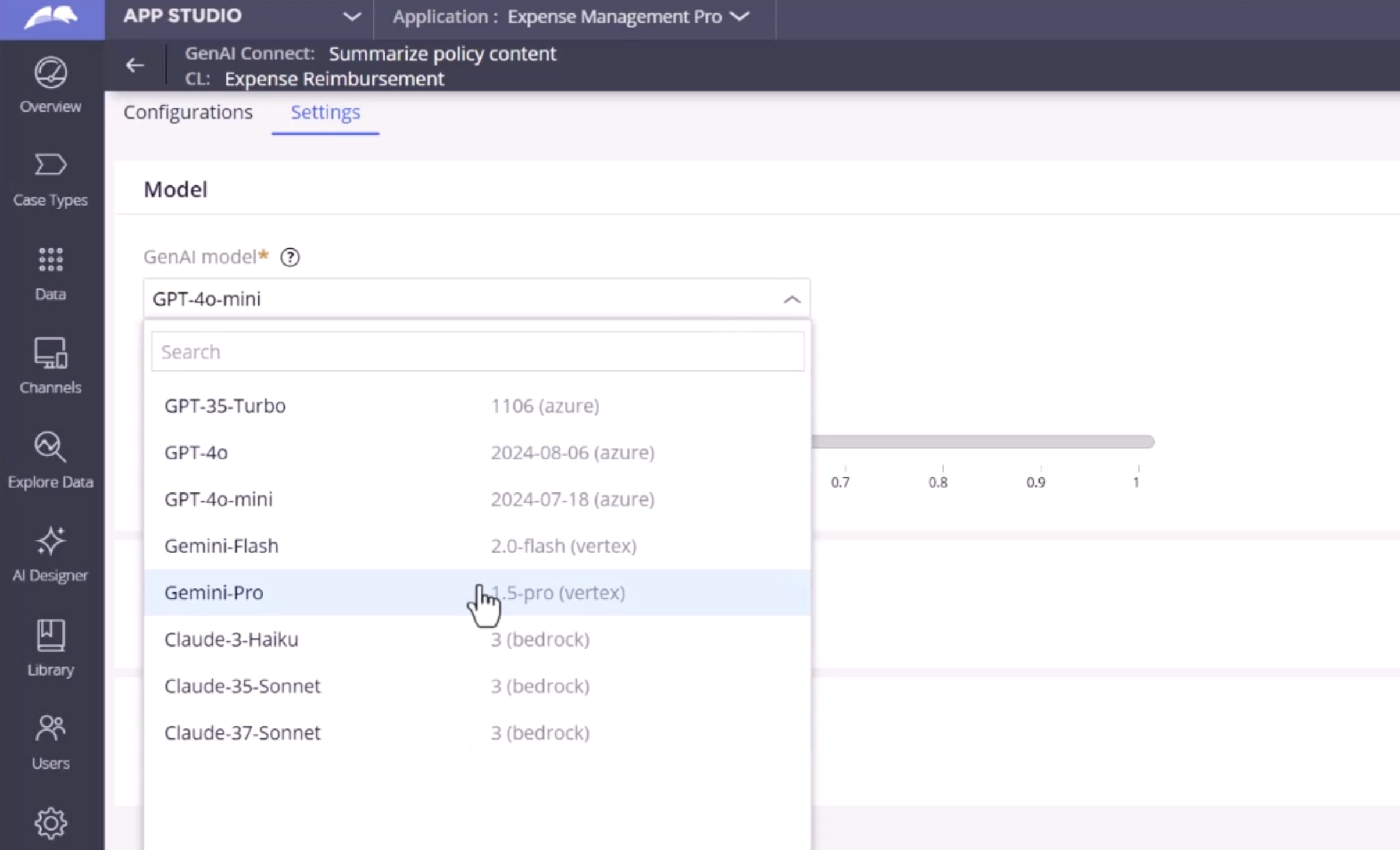

I'm running Pega in an on-prem setup. How can I use our custom LLM/AI model instead of the OOTB model Pega provides, shown in the screenshot?

Instead of this, how can I configure Pega to use my own LLM/AI model?

@DharaniM5625

To use your own LLM in an on-prem Pega setup, expose your model as a secure HTTPS REST endpoint (chat/completions style) that the Pega servers can reach, either directly on your network or through an internal gateway.
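A minimal sketch of such an endpoint, assuming your model can be called from Python and that an OpenAI-style `/v1/chat/completions` JSON shape suits your connector. The path, port, `API_KEY` value and the `generate()` hook are hypothetical placeholders: replace `generate()` with a call into your actual model, and put TLS in front of it (gateway or reverse proxy), because this sketch serves plain HTTP on localhost only.

```python
# Minimal sketch of an OpenAI-style chat/completions endpoint wrapping a
# self-hosted model. ASSUMPTIONS: the path, API_KEY and generate() are
# hypothetical placeholders, not something Pega itself prescribes.
import json
import time
import uuid
from http.server import BaseHTTPRequestHandler, HTTPServer

API_KEY = "change-me"  # hypothetical shared secret checked on every request


def generate(prompt: str) -> str:
    """Hypothetical hook: replace with a call into your own model."""
    return f"echo: {prompt}"


def handle_chat_completion(body: dict) -> dict:
    """Map a chat/completions request body to a chat/completions response."""
    messages = body.get("messages", [])
    # Use the content of the most recent user message as the prompt.
    prompt = next(
        (m.get("content", "") for m in reversed(messages) if m.get("role") == "user"),
        "",
    )
    return {
        "id": f"chatcmpl-{uuid.uuid4().hex}",
        "object": "chat.completion",
        "created": int(time.time()),
        "model": body.get("model", "my-custom-llm"),
        "choices": [
            {
                "index": 0,
                "message": {"role": "assistant", "content": generate(prompt)},
                "finish_reason": "stop",
            }
        ],
    }


class ChatHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        # Read the body first so error responses never leave unread bytes behind.
        raw = self.rfile.read(int(self.headers.get("Content-Length", 0)))
        if self.path != "/v1/chat/completions":
            self.send_error(404)
            return
        if self.headers.get("Authorization") != f"Bearer {API_KEY}":
            self.send_error(401)
            return
        try:
            body = json.loads(raw or b"{}")
        except ValueError:  # covers malformed JSON and bad encodings
            self.send_error(400)
            return
        if not isinstance(body, dict):
            self.send_error(400)
            return
        payload = json.dumps(handle_chat_completion(body)).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


if __name__ == "__main__":
    # Plain HTTP on localhost; terminate TLS in front of this before Pega uses it.
    HTTPServer(("127.0.0.1", 8080), ChatHandler).serve_forever()
```

Keeping the request-to-response mapping in a plain function (`handle_chat_completion`) makes it easy to unit-test separately from the HTTP plumbing.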

In Pega, create a GenAI provider/connector that calls this endpoint, and configure its base URL, headers, authentication (API key or OAuth), and any required request/response mapping.
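For a chat/completions-style endpoint, the request/response mapping boils down to two steps: turn a prompt into an authenticated POST, and pull the generated text out of the JSON reply. This is only a sketch of that HTTP contract under assumed names (`BASE_URL`, `MODEL`, `build_request` and `extract_text` are hypothetical); in Pega you enter the equivalent values in the connector configuration rather than writing code.

```python
# Sketch of the request/response mapping for a chat/completions-style
# endpoint. ASSUMPTIONS: BASE_URL and MODEL are hypothetical placeholders.
import json
import urllib.request

BASE_URL = "https://llm.internal.example.com/v1"  # hypothetical gateway URL
MODEL = "my-custom-llm"  # hypothetical model name


def build_request(prompt: str, token: str) -> urllib.request.Request:
    """Request mapping: prompt text -> HTTPS POST with auth headers."""
    body = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # An API key or an OAuth access token, sent as a bearer token.
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )


def extract_text(response_body: bytes) -> str:
    """Response mapping: chat/completions JSON -> generated text."""
    data = json.loads(response_body)
    return data["choices"][0]["message"]["content"]
```

If your gateway expects a different header (for example a custom API-key header instead of `Authorization: Bearer`), only `build_request` needs to change.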

Test the connector to confirm Pega can send prompts and read the generated text back successfully.
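Before (or alongside) testing inside Pega, it can help to confirm that the endpoint itself answers from a host on the same network as the Pega nodes. A minimal smoke-test sketch, assuming the chat/completions JSON shape; the URL, token and the `smoke_test` helper name are hypothetical.

```python
# Quick connectivity check for a chat/completions-style endpoint.
# ASSUMPTIONS: URL, token and the "ping" prompt are hypothetical placeholders.
import json
import sys
import urllib.request


def smoke_test(url: str, token: str, prompt: str = "ping", timeout: float = 30.0) -> str:
    """POST one chat/completions request and return the generated text."""
    body = json.dumps({"messages": [{"role": "user", "content": prompt}]}).encode("utf-8")
    req = urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {token}"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        data = json.loads(resp.read())
    text = data["choices"][0]["message"]["content"]
    if not text:
        raise RuntimeError("endpoint answered but returned empty text")
    return text


if __name__ == "__main__":
    if len(sys.argv) != 3:
        sys.exit("usage: python smoke_test.py <chat-completions-url> <token>")
    try:
        print(smoke_test(sys.argv[1], sys.argv[2]))
    # OSError covers URLError/HTTPError and timeouts; ValueError covers bad JSON.
    except (OSError, ValueError, KeyError, IndexError, RuntimeError) as exc:
        sys.exit(f"smoke test failed: {exc}")
```

If this script succeeds but the Pega test fails, the problem is more likely in the connector settings (URL, auth, mapping) or in certificate trust on the Pega side than in the model endpoint.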

Then go to App Studio → GenAI Connect (your use case) → Settings, and select your newly created provider/model from the GenAI model dropdown.

Save and publish so the use case runs through your connector; Pega then routes that use case's GenAI requests to your LLM instead of the OOTB models.